Why your model never says “I don’t know” — and how to force it

I once used a stat in a LinkedIn post that AI gave me with complete confidence. Views, likes, comments. Felt great. Then someone asked me for the source. I went back to check. The stat didn’t exist. AI had just… made it up. And I had put my name on it.

That’s when I stopped treating it like an expert and started treating it like what it actually is — a fast, eager intern who would rather guess than say “I don’t know.” Everything about how I prompt it changed after that. This is what I do now.

How To Make AI Admit “I Don’t Know”

AI sounds confident even when it’s guessing. Treat it like a cautious intern.

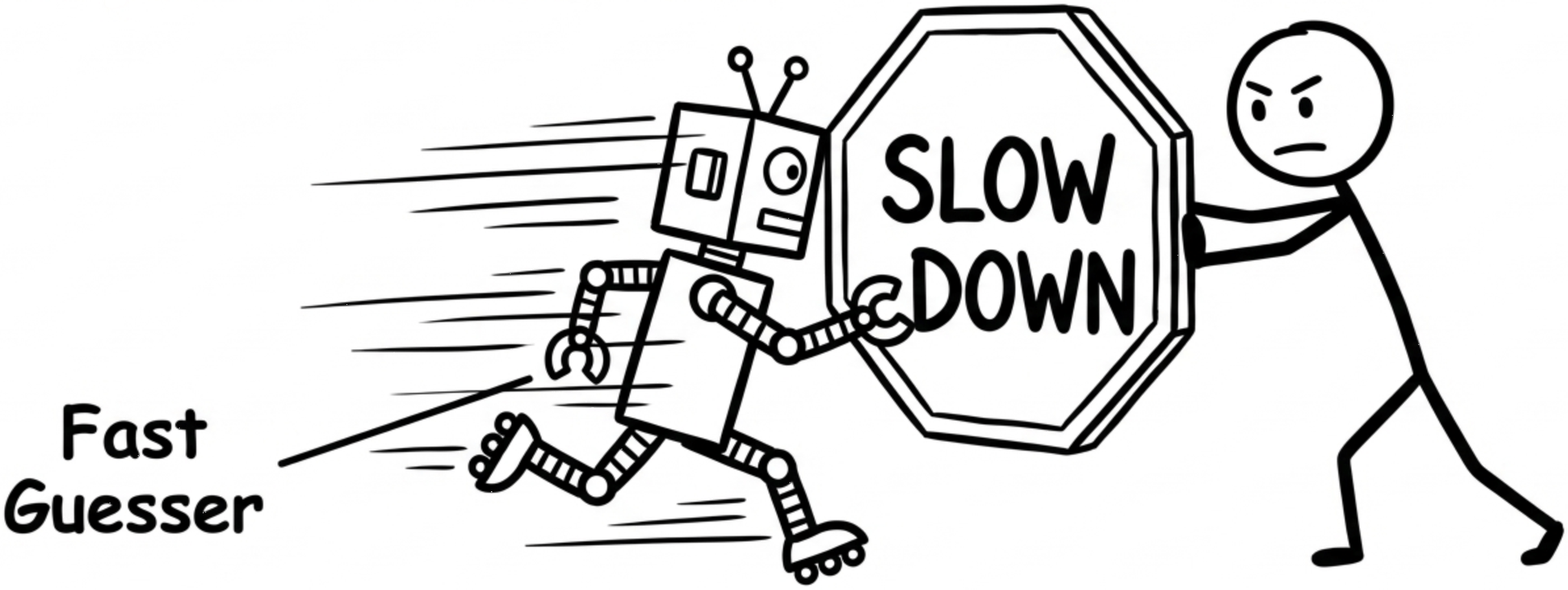

From Oracle To Intern

AI is a fast guesser, not a careful expert. Your job is to slow it down.

That confident answer AI just gave you is often a best guess. These models are trained to predict the next word, so they keep the text flowing in a way that sounds right. Sounding right is not the same thing as being true. And they are very, very good at sounding right.

The problem isn’t that AI lies. It’s that it doesn’t know when it doesn’t know. So it fills the gap anyway. Smoothly. Convincingly. And if you’re not watching for it, you carry that gap straight into your work.

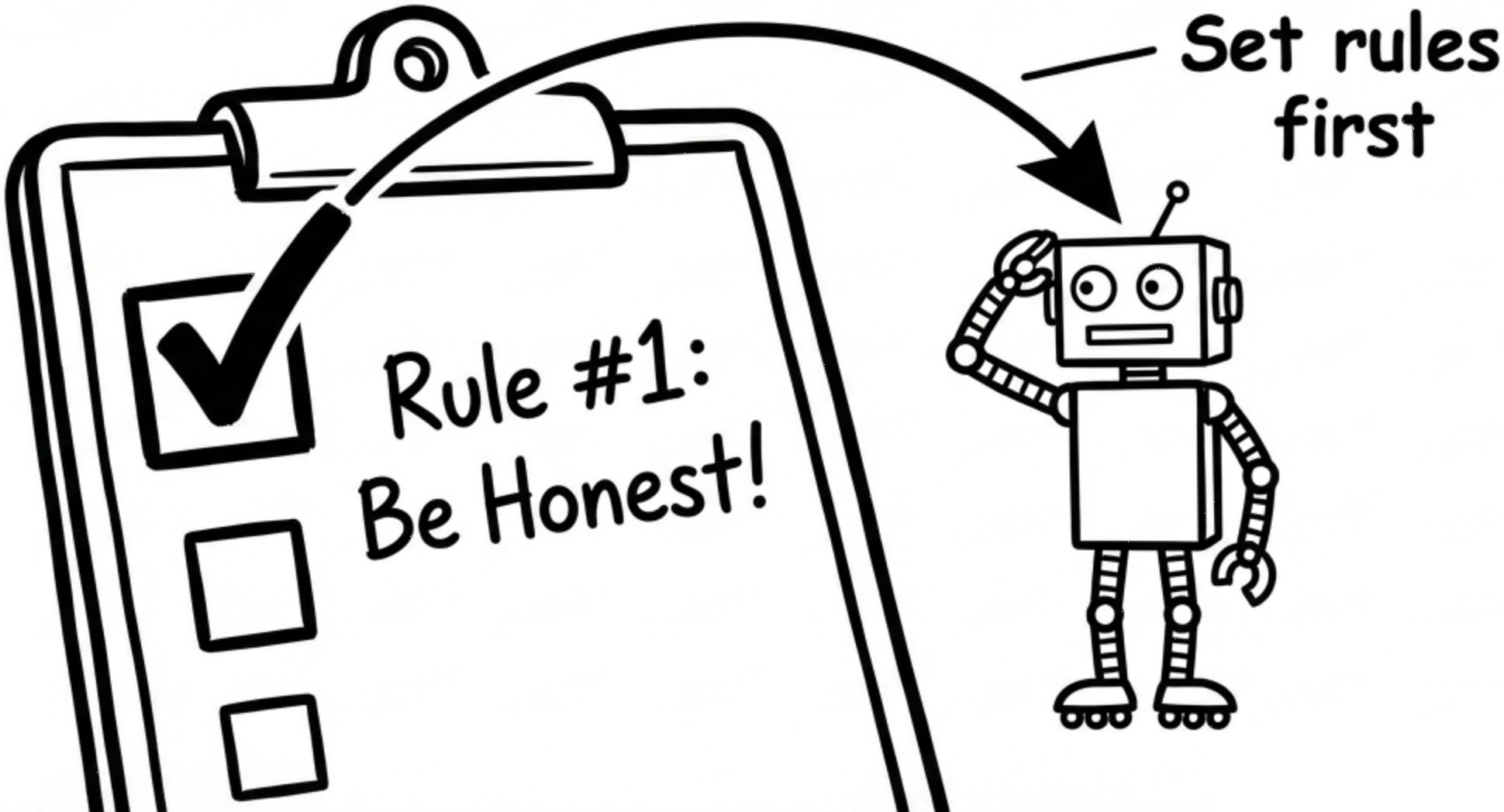

Tell It How To Behave

First line matters: define honesty rules before you ask anything else.

Most people open a chat and just ask their question. I did that for months.

Better: before you ask anything, tell it how to behave.

Example prompt:

“You are a cautious research assistant. If you are not at least 80% confident, say ‘I am not confident’ and tell me what you’d need to know. Never make up sources or numbers.”

This short prompt changes a lot. It tells the model that saying “I don’t know” is allowed. It gives it a way out that isn’t bluffing. Without this, it tends to pick sounding confident over admitting it’s lost.
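If you talk to a model from code instead of a chat window, the same idea can be sketched in Python. This is a minimal sketch, assuming a chat API that accepts a list of role/content messages (a common convention, but check your provider). `HONESTY_RULES` and `build_messages` are hypothetical names used only for illustration, and the sample question is made up.

```python
# Hypothetical helper: put the honesty rules ahead of every question.
# Assumes a chat API that takes a list of {"role", "content"} messages.
HONESTY_RULES = (
    "You are a cautious research assistant. "
    "If you are not at least 80% confident, say 'I am not confident' "
    "and tell me what you'd need to know. "
    "Never make up sources or numbers."
)


def build_messages(question, rules=HONESTY_RULES):
    """Return a message list with the rules first and the question second."""
    return [
        {"role": "system", "content": rules},
        {"role": "user", "content": question},
    ]


messages = build_messages("Is a four-day week more productive?")
```

The point of the wrapper is that the rules go in every time, so you cannot forget them on a busy day.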

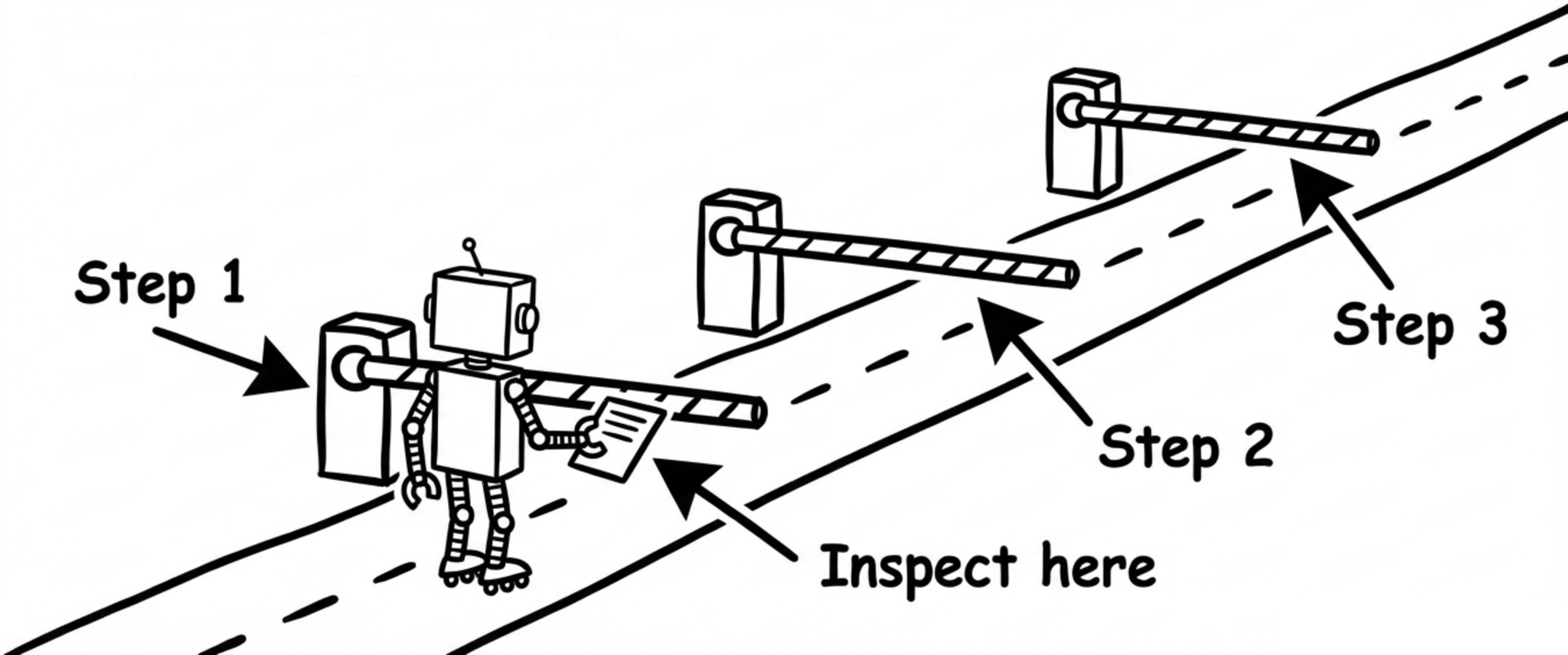

Pin It Down With Checkpoints

Force the model to think in steps you can inspect and question.

When you ask for a final answer, the model can hide a lot of guessing inside it. You get one clean paragraph and no idea how shaky the thinking underneath was.

So ask for steps instead.

Example prompt:

“Answer in three parts: 1) what the question is really asking, 2) the assumptions you’re making, 3) the final answer, plus how confident you are and why.”

Now you can see exactly where it might be going wrong. Wrong assumption in step 2? Push back on it. The guessing is no longer invisible. That’s the whole point.
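If you want to check that the model actually followed the three-part format, a rough sketch in Python could look like this. It assumes the model numbers its parts as `1)`, `2)`, `3)` at the start of a line, which it may not always do. `split_checkpoints` and `missing_parts` are hypothetical helper names, and `sample_reply` is an invented reply, not real model output.

```python
import re

CHECKPOINT_PROMPT = (
    "Answer in three parts: 1) what the question is really asking, "
    "2) the assumptions you're making, 3) the final answer, "
    "plus how confident you are and why."
)

# A line starting with "1)", "2)" or "3)", then everything up to the next marker.
_PART = re.compile(r"^\s*([123])\)\s*(.*?)(?=^\s*[123]\)|\Z)", re.M | re.S)


def split_checkpoints(reply):
    """Split a reply into its numbered parts, keyed by part number."""
    return {int(num): text.strip() for num, text in _PART.findall(reply)}


def missing_parts(reply):
    """List the part numbers that are absent or empty."""
    parts = split_checkpoints(reply)
    return [n for n in (1, 2, 3) if not parts.get(n)]


# Invented example reply, for illustration only.
sample_reply = (
    "1) Whether a four-day week changes output.\n"
    "2) Assumes output is measured per employee.\n"
    "3) Unclear. Confidence: low, because I have no data here."
)
assumptions = split_checkpoints(sample_reply)[2]
```

If `missing_parts` returns anything, the model skipped a checkpoint, and that is usually a good reason to ask again before reading the final answer.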

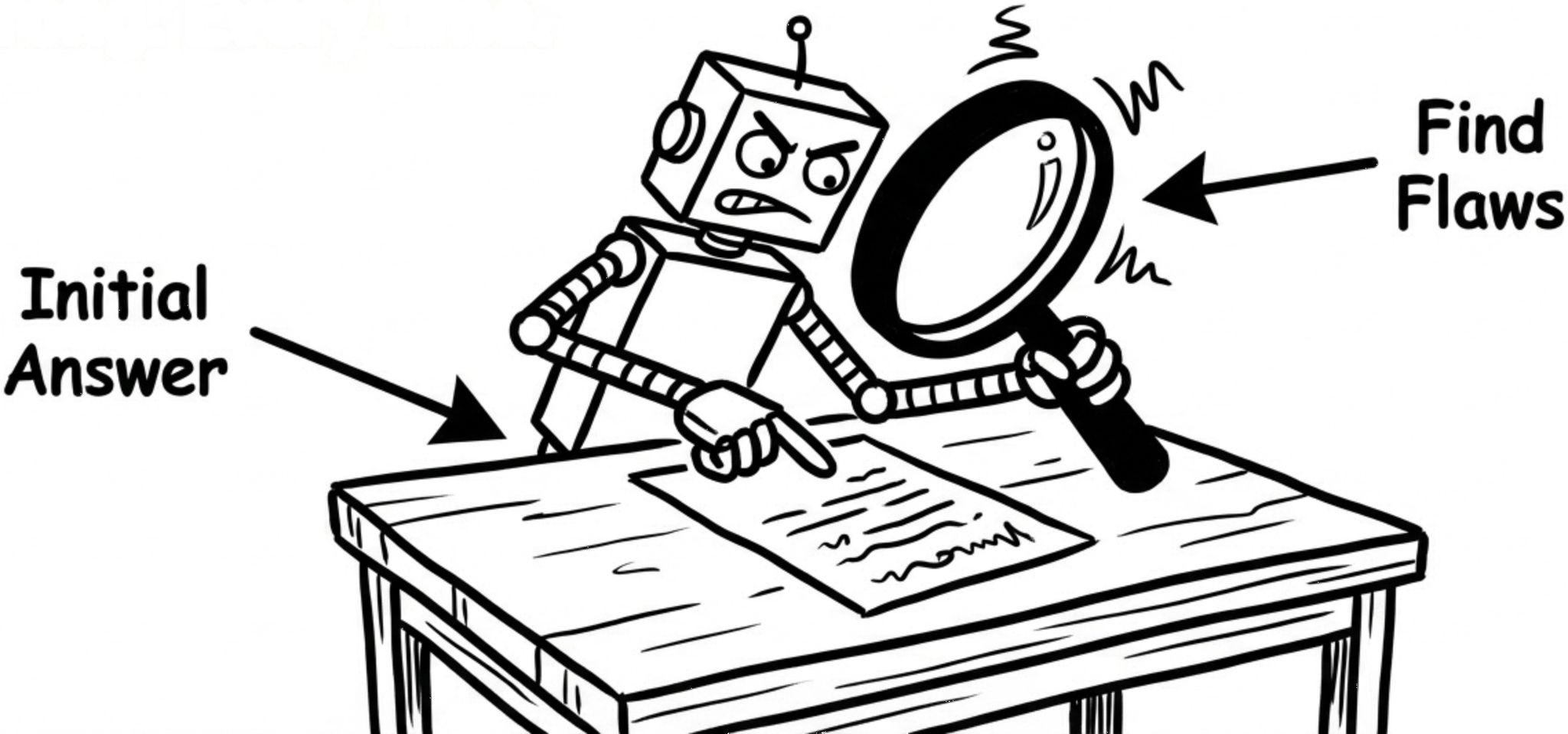

Ask It To Attack Itself

After an answer, ask for ways it could be wrong. Every time.

This is the one I use most now.

Once it gives me an answer, I don’t stop there. I ask it to come after itself.

Example prompt:

“Now act as a skeptic. Give me the top 5 reasons your answer might be wrong. For each one, tell me how I could check.”

It works because the model that just explained something confidently is now trying to break it. It finds gaps the first answer skipped. Missing data. Edge cases. Assumptions it treated as facts.

You can even loop it: “Fix the biggest risk you just listed.” That’s how you turn a first draft into something you can trust a lot more.
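In code, the skeptic pass and the fix loop are just two more turns in the same conversation. Here is a minimal sketch, again assuming a role/content message list. `add_turn` is a hypothetical helper, and the bracketed replies are placeholders where real model output would go.

```python
SKEPTIC_PROMPT = (
    "Now act as a skeptic. Give me the top 5 reasons your answer "
    "might be wrong. For each one, tell me how I could check."
)
FIX_PROMPT = "Fix the biggest risk you just listed."


def add_turn(history, reply, follow_up):
    """Return a new history with the model's reply and the next challenge."""
    return history + [
        {"role": "assistant", "content": reply},
        {"role": "user", "content": follow_up},
    ]


# Placeholders stand in for what the model would actually send back.
history = [{"role": "user", "content": "Is a four-day week more productive?"}]
history = add_turn(history, "<first answer from the model>", SKEPTIC_PROMPT)
history = add_turn(history, "<the model's list of risks>", FIX_PROMPT)
```

Keeping the earlier answer in the history matters here: the skeptic prompt only works if the model can see what it is supposed to attack.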

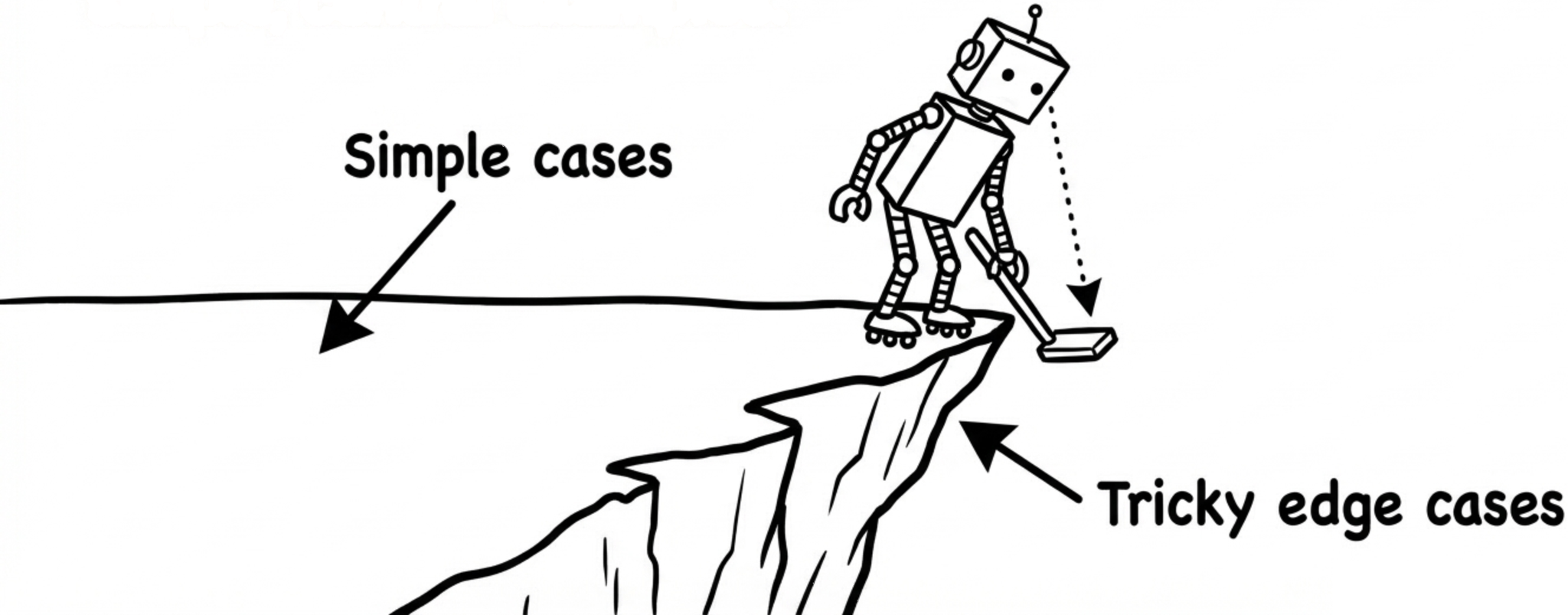

Probe The Edges, Not The Middle

Test it with tricky edge cases instead of only simple, central examples.

AI is most dangerous at the edges — old data, rare situations, very specific details. The main answer usually sounds fine. It’s the corners where things quietly fall apart.

After any main answer, I ask things like:

“Give me an example where this breaks.”

“Where would experts disagree on this?”

“What changes if this is from five years ago?”

If the model suddenly gets vague or starts contradicting what it just said — that’s the sign. The clean first answer was hiding something messy underneath.

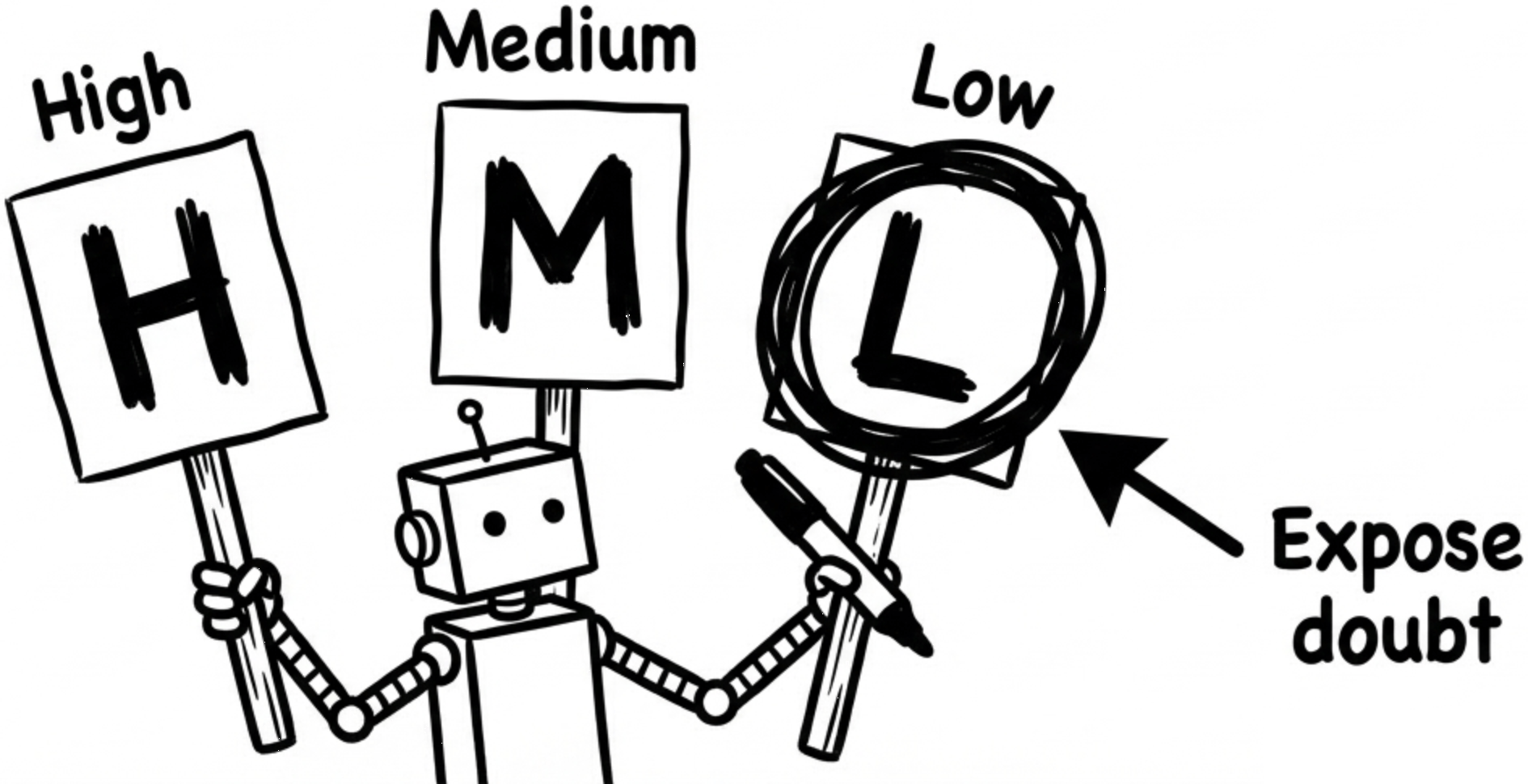

Make It Admit When It’s Guessing

Make it tag each answer as high, medium, or low confidence.

AI hides uncertainty inside smooth sentences. One way to stop that is to force it to label what it actually knows.

Example prompt:

“For every answer, start with CONFIDENCE: High / Medium / Low. One sentence on why. If it’s Medium or Low, tell me what’s missing.”

High means you can probably use it as a starting point. Low means go verify it yourself before it ends up in your work with your name on it.

I learned that last part the hard way.
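If you collect answers in a script, you can read the label and flag anything that needs a manual check. This is a rough sketch, assuming the model starts its reply with the `CONFIDENCE:` line exactly as the prompt asks. `parse_confidence` and `needs_verification` are hypothetical names.

```python
import re


def parse_confidence(reply):
    """Return 'high', 'medium' or 'low' from a leading CONFIDENCE label, else None."""
    match = re.match(r"\s*CONFIDENCE:\s*(high|medium|low)\b", reply, re.IGNORECASE)
    return match.group(1).lower() if match else None


def needs_verification(reply):
    """Flag anything not labeled high, including replies with no label at all."""
    return parse_confidence(reply) != "high"
```

A missing label is treated the same as a low one on purpose. And a High label is still the model grading itself, so it is a reason to start from the answer, not to skip the source check.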

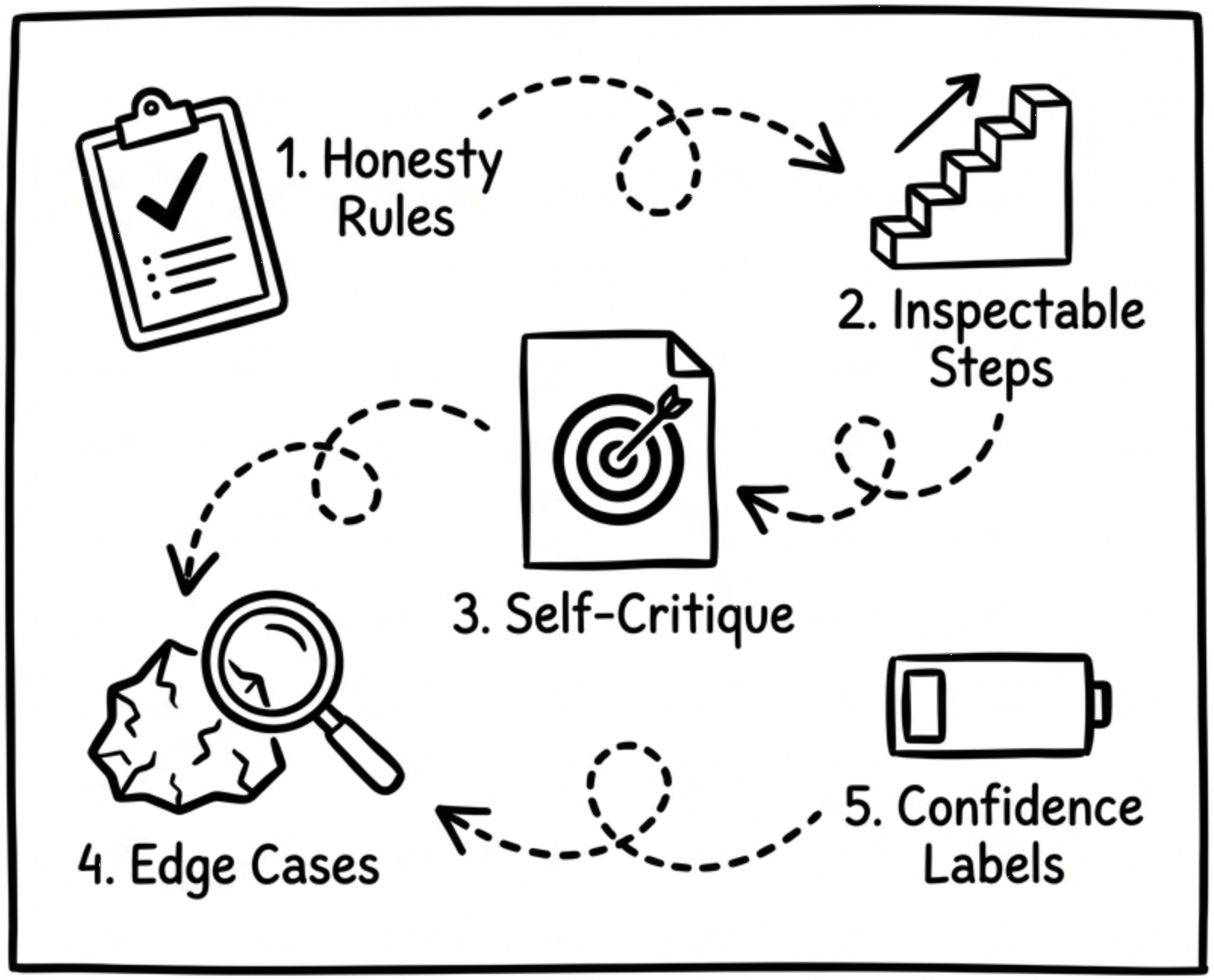

TL;DR: The Honest AI Playbook

- Set strict honesty rules before asking real questions
- Break answers into steps you can inspect and challenge
- Make the model list ways it might be wrong
- Push on edge cases to expose hidden hallucinations
- Require confidence labels so doubt becomes visible

🎯 Why It Matters

The model isn’t trying to trick you. It just can’t tell the difference between knowing something and sounding like it knows something. That’s your job now. These five moves are how I do it.