Why your model never says “I don’t know” — and how to force it

AI didn’t betray us by hallucinating. We betrayed ourselves by believing the confidence in its voice. These systems were trained to sound sure, not to know when they’re lost. Once you see the model as a fast, eager intern instead of a wise expert, everything about how you prompt it has to change. This is the playbook I wish I’d had before I quietly imported its mistakes into my work.

How To Make AI Admit “I Don’t Know”

AI sounds confident even when it’s guessing. Treat it like a cautious intern.

From Oracle To Intern

AI is a fast guesser, not a careful expert. Your job is to slow it down.

That confident AI answer you just got is mostly a best guess, not a sworn statement. Large language models are built to continue text in a way that sounds right. They are not built to track truth by default.

So you have to treat the model like a smart but overconfident intern. It wants to impress you, so it fills gaps instead of saying “no idea”.

Your job is to force it into honesty mode. You do that by adding constraints, asking for step‑by‑step checks, and using questions that try to break its answer. Done right, this pushes the model to admit uncertainty before you trust what it says.

Tell It How To Behave

First line matters: define honesty rules before you ask anything else.

Most people jump straight to their question. Better: start with ground rules.

Example prompt:

“You are a cautious research assistant. If you are not at least 80 percent confident, say ‘I am not confident’ and list what you would need to know. Never make up sources or numbers.”

This tells the model that uncertainty is allowed and expected. It also gives it a phrase to use instead of bluffing.

You can tweak the confidence bar: 60 percent for brainstorming, 90 percent for medical or legal topics. The key idea is simple: set the safety policy before you ask the question.
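If you send prompts from a script, the ground rules can live in one small helper so they always go first. This is a minimal sketch, not a definitive setup: the task names, the `CONFIDENCE_BARS` table, and the role-tagged message layout are all assumptions for illustration, and the percentages simply mirror the examples above rather than any calibrated threshold.

```python
# Hypothetical confidence bars per task type. The numbers mirror the
# examples in the text; they are not calibrated thresholds.
CONFIDENCE_BARS = {
    "brainstorm": 60,
    "default": 80,
    "medical": 90,
    "legal": 90,
}


def honesty_preamble(task_type: str = "default") -> str:
    """Return the ground-rules text to send before the real question."""
    # Unknown task types fall back to the default bar.
    bar = CONFIDENCE_BARS.get(task_type, CONFIDENCE_BARS["default"])
    return (
        "You are a cautious research assistant. "
        f"If you are not at least {bar} percent confident, say "
        "'I am not confident' and list what you would need to know. "
        "Never make up sources or numbers."
    )


def build_messages(question: str, task_type: str = "default") -> list[dict]:
    """Put the rules first, then the question, as role-tagged messages."""
    return [
        {"role": "system", "content": honesty_preamble(task_type)},
        {"role": "user", "content": question},
    ]
```

Calling `build_messages("Your question here", "legal")` would put the 90 percent rule ahead of the question. How you hand those messages to a model depends on the tool you use.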

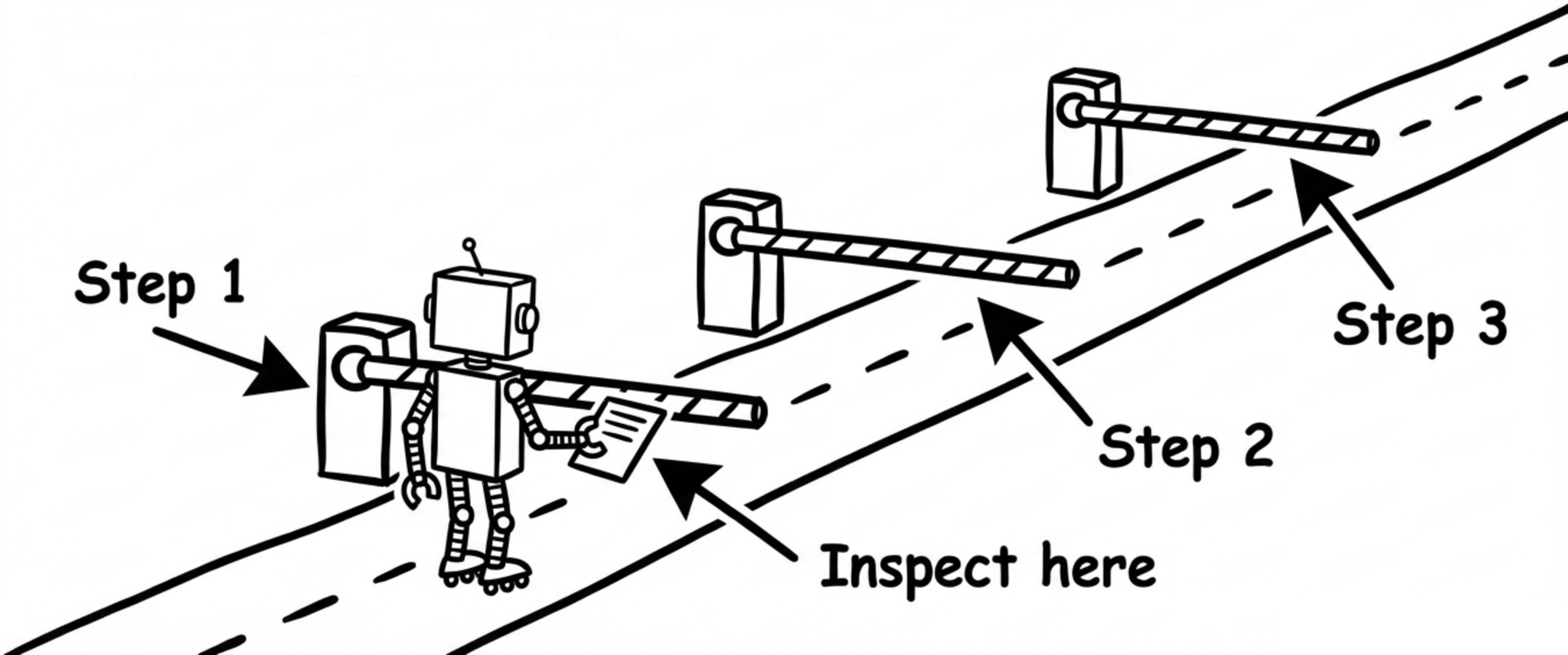

Pin It Down With Checkpoints

Force the model to think in steps you can inspect and question.

If you ask for a final answer only, the model can hide a lot of guessing. So ask for checkpoints.

Example prompt:

“Answer in three parts:

1) What the question is really asking

2) The assumptions you are making

3) The final answer, plus how confident you are and why”

Now you can see where it might be hallucinating: wrong assumption, missing data, or a leap in logic. You can then reply with: “Recheck step 2. That assumption is wrong because …”.

By slowing the model down, you turn invisible guessing into visible steps you can challenge.
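The checkpoint idea can be sketched in code too. The helpers below are hypothetical names, and the parser assumes the model actually followed the `1)`, `2)`, `3)` numbering, which it will not always do. Treat it as a rough starting point.

```python
import re

CHECKPOINT_INSTRUCTIONS = (
    "Answer in three parts:\n"
    "1) What the question is really asking\n"
    "2) The assumptions you are making\n"
    "3) The final answer, plus how confident you are and why"
)


def checkpoint_prompt(question: str) -> str:
    """Append the three-part instructions to the question."""
    return f"{question}\n\n{CHECKPOINT_INSTRUCTIONS}"


def split_checkpoints(reply: str) -> dict[int, str]:
    """Split a reply on lines that start with '1)', '2)' or '3)'.

    Assumes the model kept the numbering; returns only the parts it finds.
    """
    # A marker at the start of a line, then text up to the next marker or the end.
    pattern = re.compile(r"^\s*([123])\)\s*(.*?)(?=^\s*[123]\)|\Z)", re.S | re.M)
    return {int(m.group(1)): m.group(2).strip() for m in pattern.finditer(reply)}


def recheck_prompt(step: int, reason: str) -> str:
    """Build the follow-up that challenges one specific step."""
    return f"Recheck step {step}. That assumption is wrong because {reason}"
```

If `split_checkpoints` comes back without a key for step 2, that alone is useful: the model skipped its assumptions, so ask again before reading the final answer.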

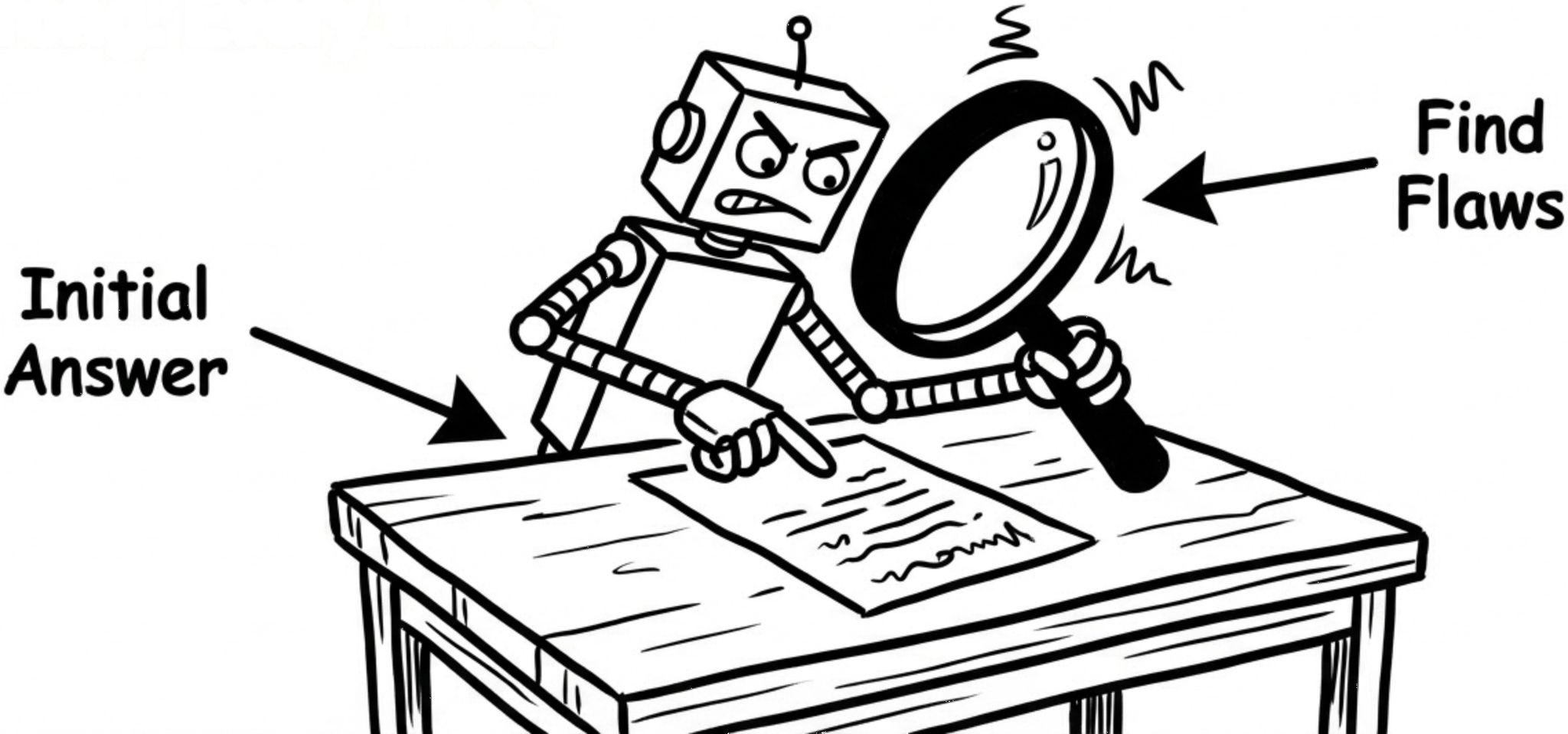

Ask It To Attack Itself

After an answer, ask for ways it could be wrong. Every time.

Once the model gives an answer, do not stop there. Ask it to argue against itself.

Example prompt:

“Now act as a skeptic. List the top 5 reasons your answer might be wrong or incomplete. For each, say how we could check it.”

This simple move flips the model from “confident explainer” to “critical reviewer”. It often surfaces missing data, edge cases, or alternative explanations that the first answer skipped.

You can even run a loop: “Update your answer, fixing the biggest risk you just listed.” This is how you bake in doubt as a feature, not a bug.
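Here is one way the answer, critique, update loop might look if you automate it. `ask` is a hypothetical stand-in for whatever function calls your model: it takes the conversation so far as a list of strings and returns the next reply. The fixed number of rounds is also an assumption; nothing guarantees that more rounds give a better answer.

```python
from typing import Callable

SKEPTIC_PROMPT = (
    "Now act as a skeptic. List the top 5 reasons your answer might be "
    "wrong or incomplete. For each, say how we could check it."
)
REVISE_PROMPT = "Update your answer, fixing the biggest risk you just listed."


def critique_loop(
    ask: Callable[[list[str]], str], question: str, rounds: int = 1
) -> dict:
    """Run answer -> critique -> revision for a fixed number of rounds.

    `ask` is a placeholder for your model call (conversation in, reply out).
    """
    history = [question]
    answer = ask(history)
    history.append(answer)
    critiques = []
    for _ in range(rounds):
        # Ask the model to argue against its own answer.
        history.append(SKEPTIC_PROMPT)
        critique = ask(history)
        history.append(critique)
        critiques.append(critique)
        # Ask it to revise with that critique in view.
        history.append(REVISE_PROMPT)
        answer = ask(history)
        history.append(answer)
    return {"final_answer": answer, "critiques": critiques, "history": history}
```

Keeping the critiques in the result matters more than the final answer: they are the list of things you still need to check yourself.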

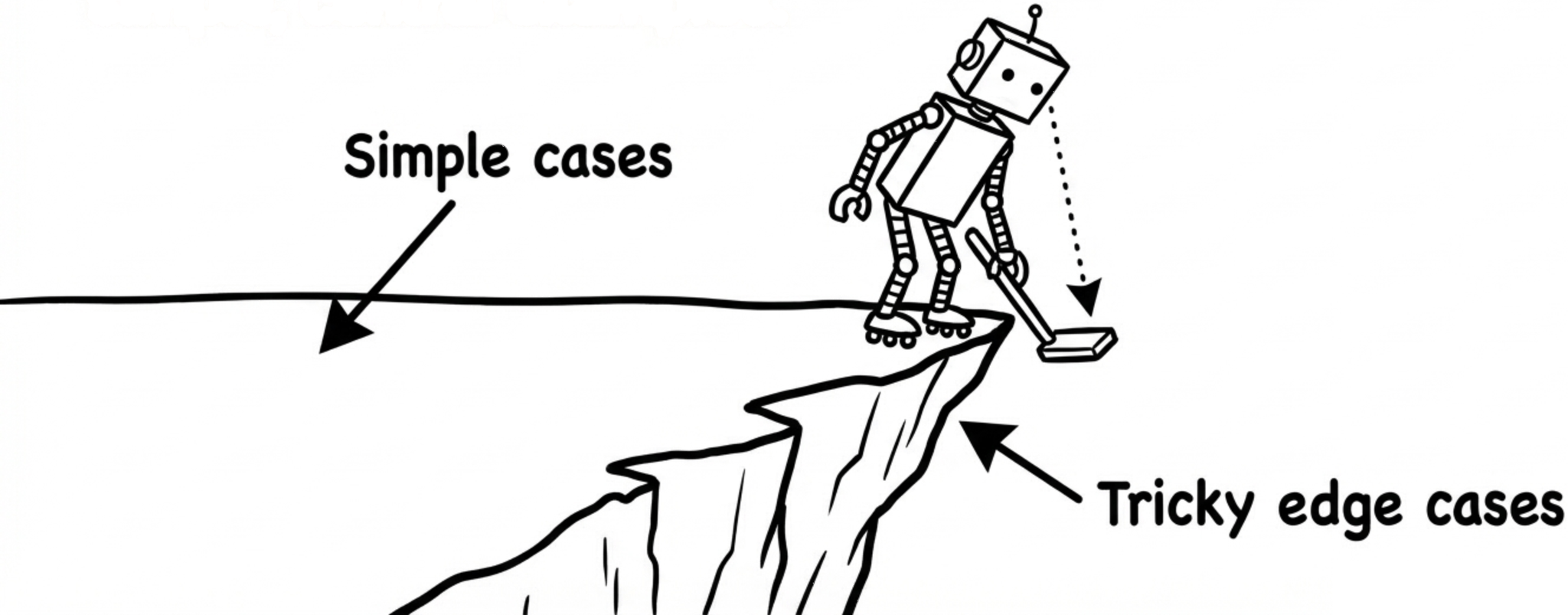

Probe The Edges, Not The Middle

Test it with tricky edge cases instead of only simple, central examples.

Hallucinations often show up at the edges of a topic: rare cases, old data, or very specific details.

So after a main answer, ask things like:

“Give me an example where this rule breaks.”

“Describe a situation where experts would disagree on this.”

“What would change if this was in 2012 instead of now?”

These questions push the model into the messy parts of reality. If it suddenly becomes vague or contradicts itself, that is a sign you should not trust the clean first answer.

You are basically stress‑testing the model, the way you would stress‑test a new hire.
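If you want to run the same probes after every main answer, a small helper keeps you honest about actually doing it. This is a sketch under assumptions: `ask` is a hypothetical function that takes a prompt string and returns the model's text, and the way the earlier answer is pasted into the prompt is just one possible layout. Judging whether a reply is vague or contradictory is still left to you.

```python
from typing import Callable

EDGE_PROBES = [
    "Give me an example where this rule breaks.",
    "Describe a situation where experts would disagree on this.",
    "What would change if this was in 2012 instead of now?",
]


def run_edge_probes(
    ask: Callable[[str], str], main_answer: str
) -> list[tuple[str, str]]:
    """Send each probe alongside the main answer and pair it with the reply.

    `ask` is a placeholder for your model call (prompt in, text out).
    The replies are meant to be read side by side by a human.
    """
    results = []
    for probe in EDGE_PROBES:
        prompt = f"Earlier answer:\n{main_answer}\n\n{probe}"
        results.append((probe, ask(prompt)))
    return results
```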

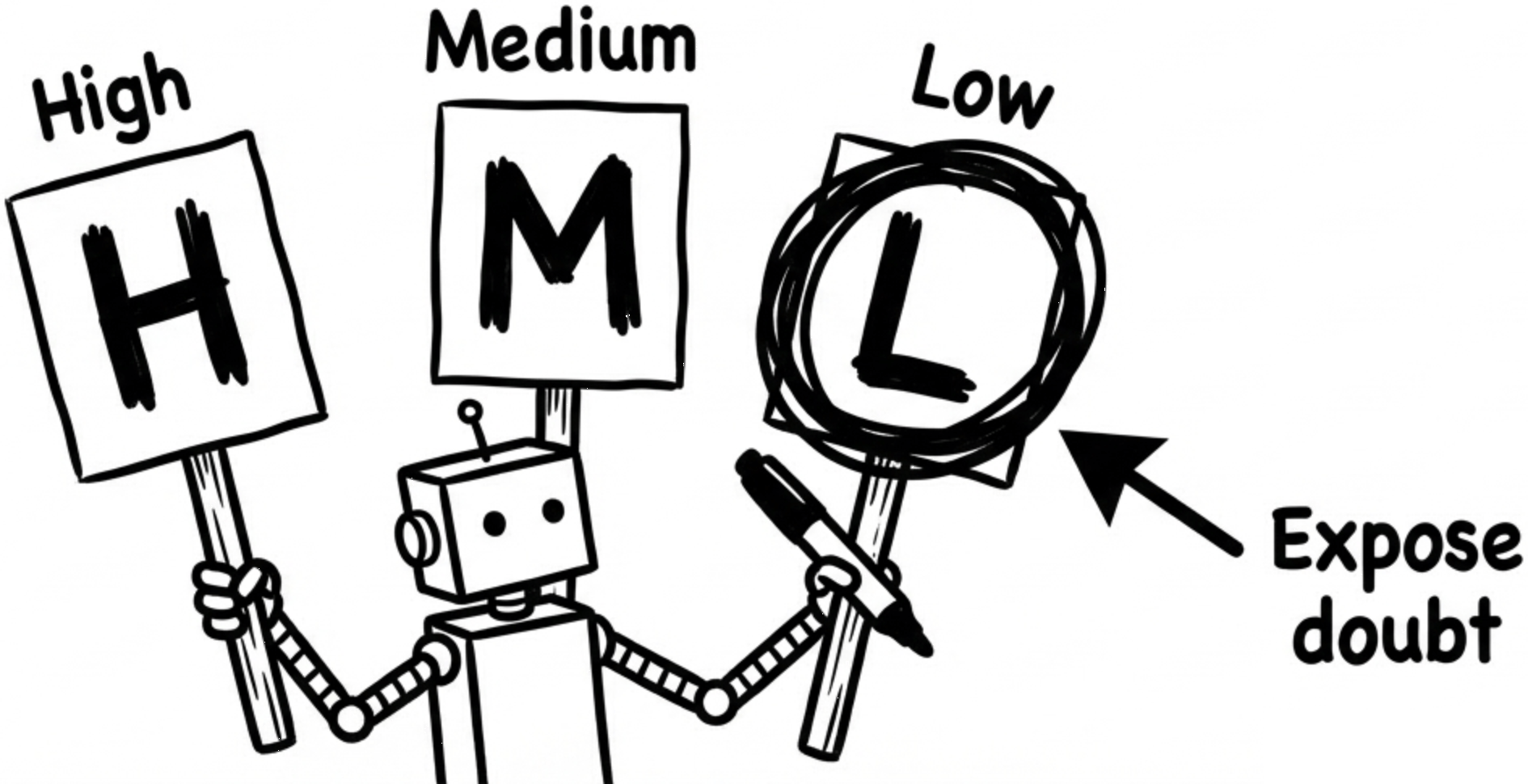

Force A Clear Confidence Label

Make it tag each answer as high, medium, or low confidence.

AI often hides uncertainty inside smooth language. So force it to label how sure it is.

Example prompt:

“For every answer, start with CONFIDENCE: High / Medium / Low, then explain why in one sentence. If confidence is Medium or Low, say what data is missing.”

This does two things. First, it makes the model think about its own limits. Second, it gives you a quick visual cue: High might be fine for a draft; Low means “do real research”.

Over time, you will start to notice patterns in when the model is shaky, and you can avoid those traps earlier.
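A label at the start of every answer is also easy to read with code, which helps with the pattern-spotting. The sketch below assumes the reply really does begin with `CONFIDENCE:`; the `next_step` mapping and the per-topic log are illustrative choices of mine, not a standard.

```python
import re
from collections import Counter

LABEL_INSTRUCTIONS = (
    "For every answer, start with CONFIDENCE: High / Medium / Low, then "
    "explain why in one sentence. If confidence is Medium or Low, say what "
    "data is missing."
)

_LABEL_RE = re.compile(r"\s*CONFIDENCE:\s*(high|medium|low)\b", re.IGNORECASE)


def parse_confidence(reply: str) -> str:
    """Return 'high', 'medium', 'low', or 'missing' if no label leads the reply."""
    match = _LABEL_RE.match(reply)
    return match.group(1).lower() if match else "missing"


def next_step(label: str) -> str:
    """Map a label to an action; this mapping is an illustrative assumption."""
    if label == "high":
        return "ok for a draft"
    if label == "medium":
        return "check the missing data"
    return "do real research"  # low, or the model skipped the label


def tally_by_topic(log: list[tuple[str, str]]) -> dict[str, Counter]:
    """Count labels per topic from (topic, reply) pairs to spot shaky areas."""
    counts: dict[str, Counter] = {}
    for topic, reply in log:
        counts.setdefault(topic, Counter())[parse_confidence(reply)] += 1
    return counts
```

Treating a missing label the same as Low is deliberate: if the model ignored the format, that is a reason to trust the answer less, not more.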

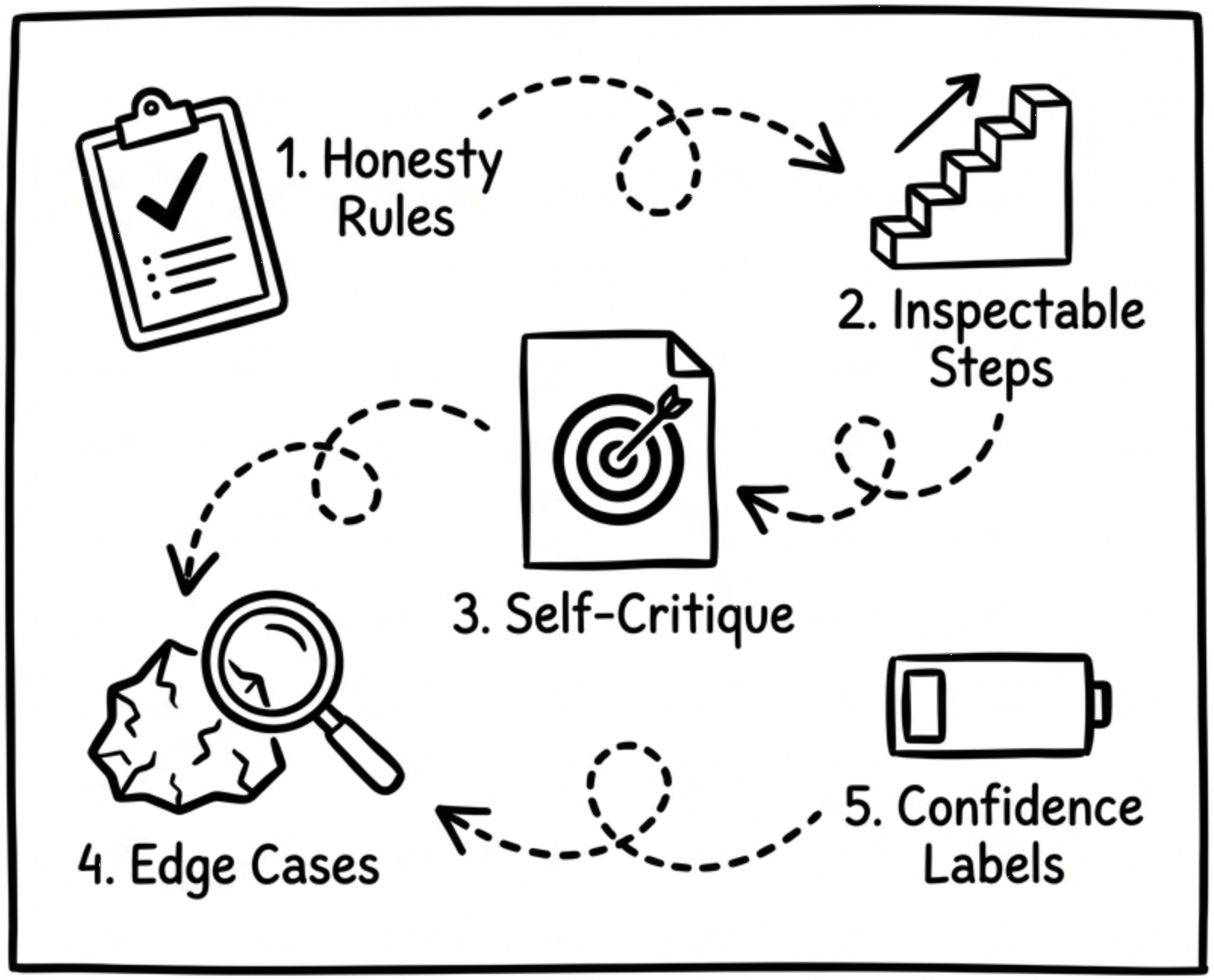

TL;DR: The Honest AI Playbook

Set strict honesty rules before asking real questions

Break answers into steps you can inspect and challenge

Make the model list ways it might be wrong

Push on edge cases to expose hidden hallucinations

Require confidence labels so doubt becomes visible

🎯 Why It Matters

If you do not manage AI’s confidence, you quietly import its mistakes into your work.