Why your smooth first answer collapses on the second question, and what that silence is trying to tell you.

I thought AI was making me sharper. Instead, it exposed how hollow my “understanding” really was. I could parrot flawless explanations, right up until someone asked a second, simple question, and then everything fell apart. This essay sits in that brutal gap between repeating answers and rebuilding them in your own words, and explains why that gap is becoming the most dangerous blind spot in how we learn now.

The difference between repeating an explanation and being able to give one yourself

AI can explain anything for you. That silence after the second question is yours.

The Feynman Gut-Punch

You don’t know it if you can’t explain it in your own words.

Sara has a big meeting. She asks ChatGPT to explain “blockchain” and copies the neat answer into her slides. In the room, she sounds smooth while reading it.

Then someone asks a simple follow-up: “So… why can’t we just edit a block later if there’s a mistake?” Sara freezes.

That gap is what physicist Richard Feynman pointed at. Real understanding means you can explain an idea simply, in your own words, to someone who doesn’t know it. Reading or repeating an explanation is not the same thing.

Two Saras, One Room

One Sara repeats AI’s answer. The other builds her own.

Picture two versions of Sara in that meeting.

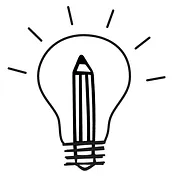

Sara A read the AI answer twice. She can repeat sentences almost word for word. As long as nobody interrupts, she sounds sharp.

Sara B used the AI answer differently. She read it, then closed the tab and tried to rewrite the idea on a blank page, as if explaining it to her 10-year-old cousin.

Same tool, totally different result. Sara A has fluent repetition. Sara B has the start of real understanding. You can hear the difference the moment someone says, “Wait, can you give a simple example?”

The Follow-Up Question Trap

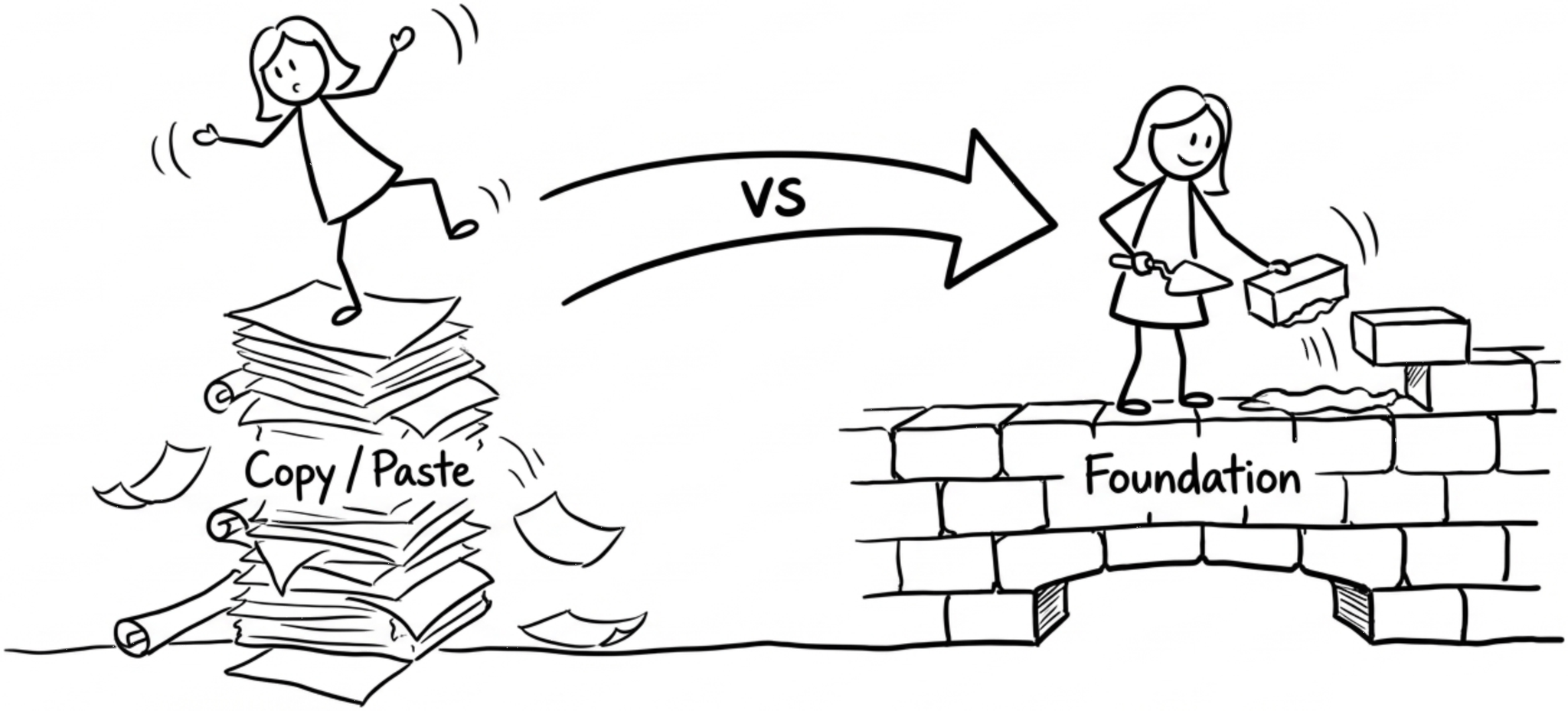

Fluent fakers break on the second question, not the first.

In Sara’s meeting, the first question is easy. It matches a sentence she remembers from the AI answer. She parrots it back and everyone nods.

Then comes the second question: “What would happen if one computer in the network lies?” There is no matching sentence in her memory. Now she has to think.

Follow-up questions expose where your own mental model stops. A mental model is your internal picture of how something works. If that picture is thin, you run out of words fast.

Rebuild, Don’t Just Reread

Close the tab. Now explain it to an imaginary 10-year-old.

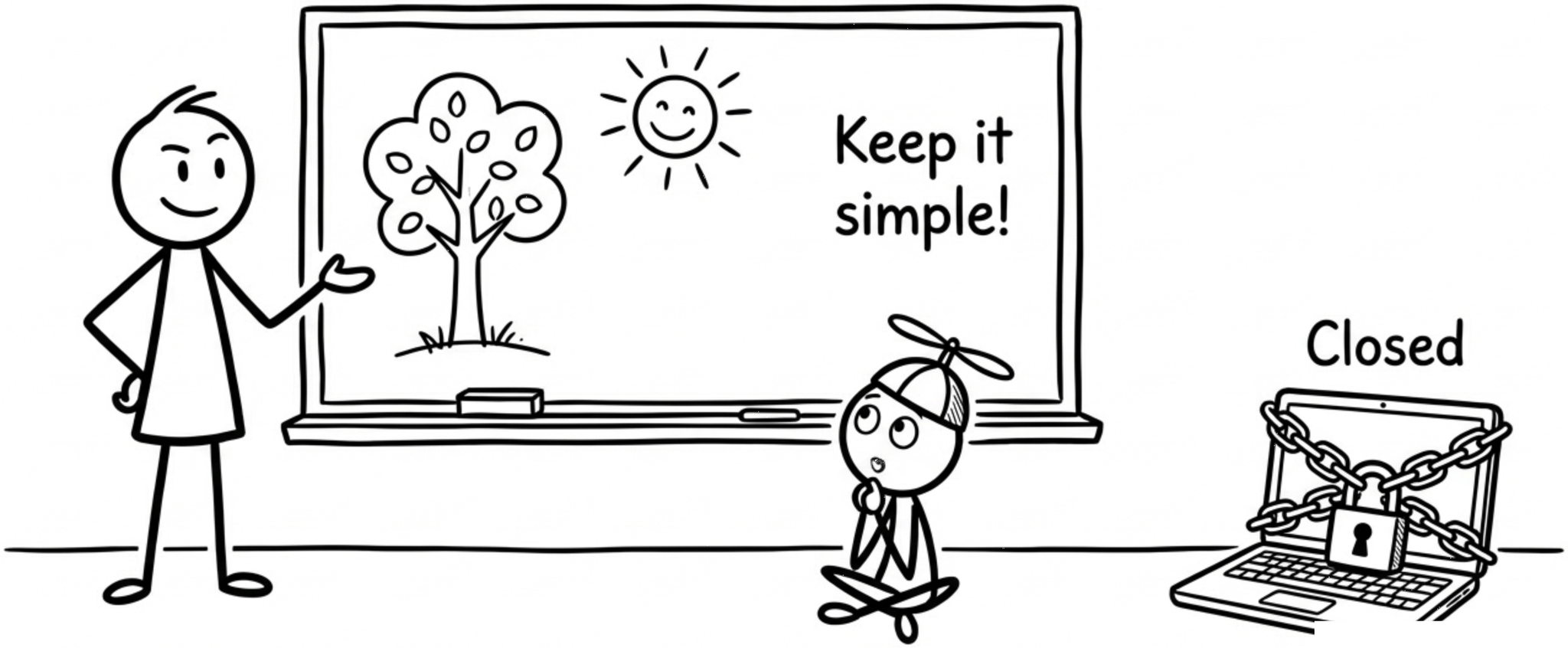

Here is a simple Feynman-style move you can steal from Sara B.

1. Ask AI for an explanation of the topic.
2. Read it once. No highlighting. No rereading.
3. Close the window.
4. On paper, explain it as if to a curious 10-year-old who keeps saying “Why?”

When you get stuck, mark the gap. That is where you do a targeted search or ask AI again, but only about that missing piece. You are not copying anymore. You are rebuilding the idea in your own head.

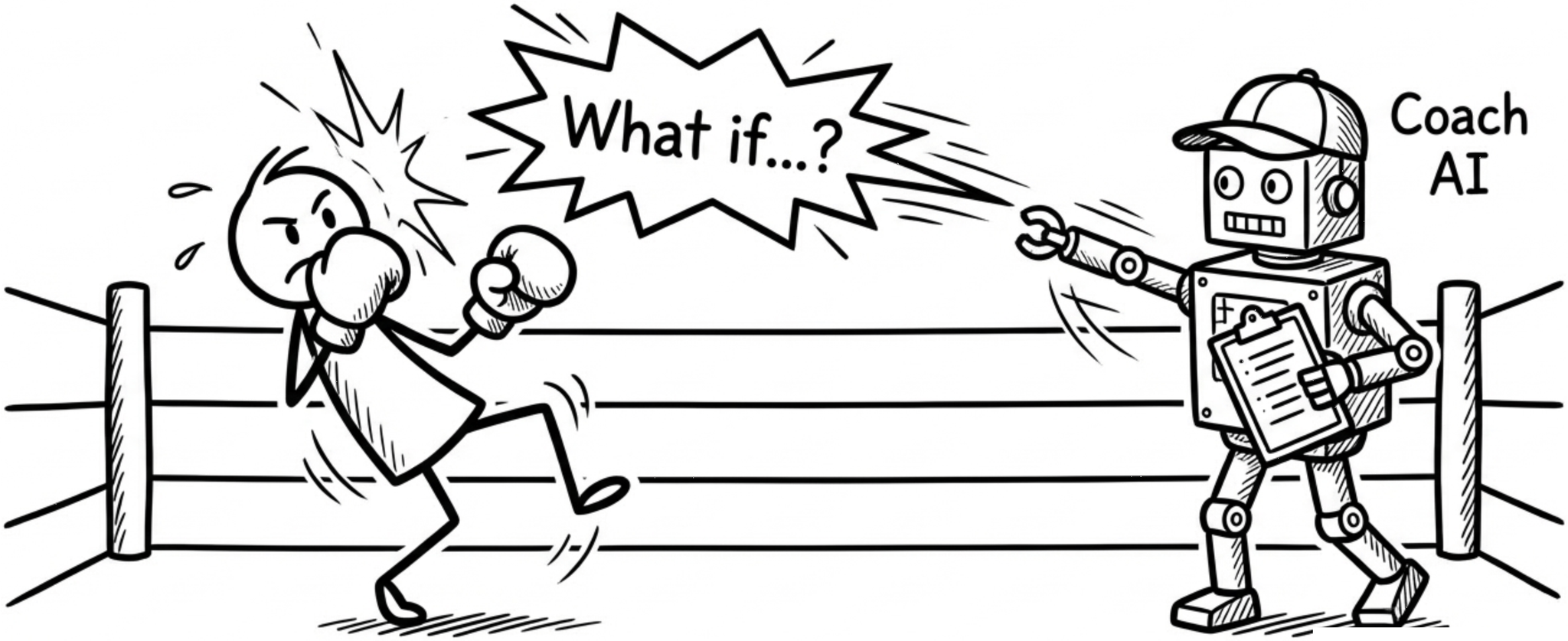

Use AI As A Sparring Partner

Let AI ask you questions instead of always answering yours.

Flip the usual script. Instead of asking, “Explain Kubernetes to me,” try this:

“I’ll explain Kubernetes to you like I’m 14. Your job is to:

- point out unclear parts
- ask 3 follow-up questions
- highlight anything that sounds memorized, not understood.”

Then you write your explanation first.

Now AI is not your crutch. It is your sparring partner. It pushes on weak spots and asks the annoying questions your future boss, client, or student will ask. You still have to think.

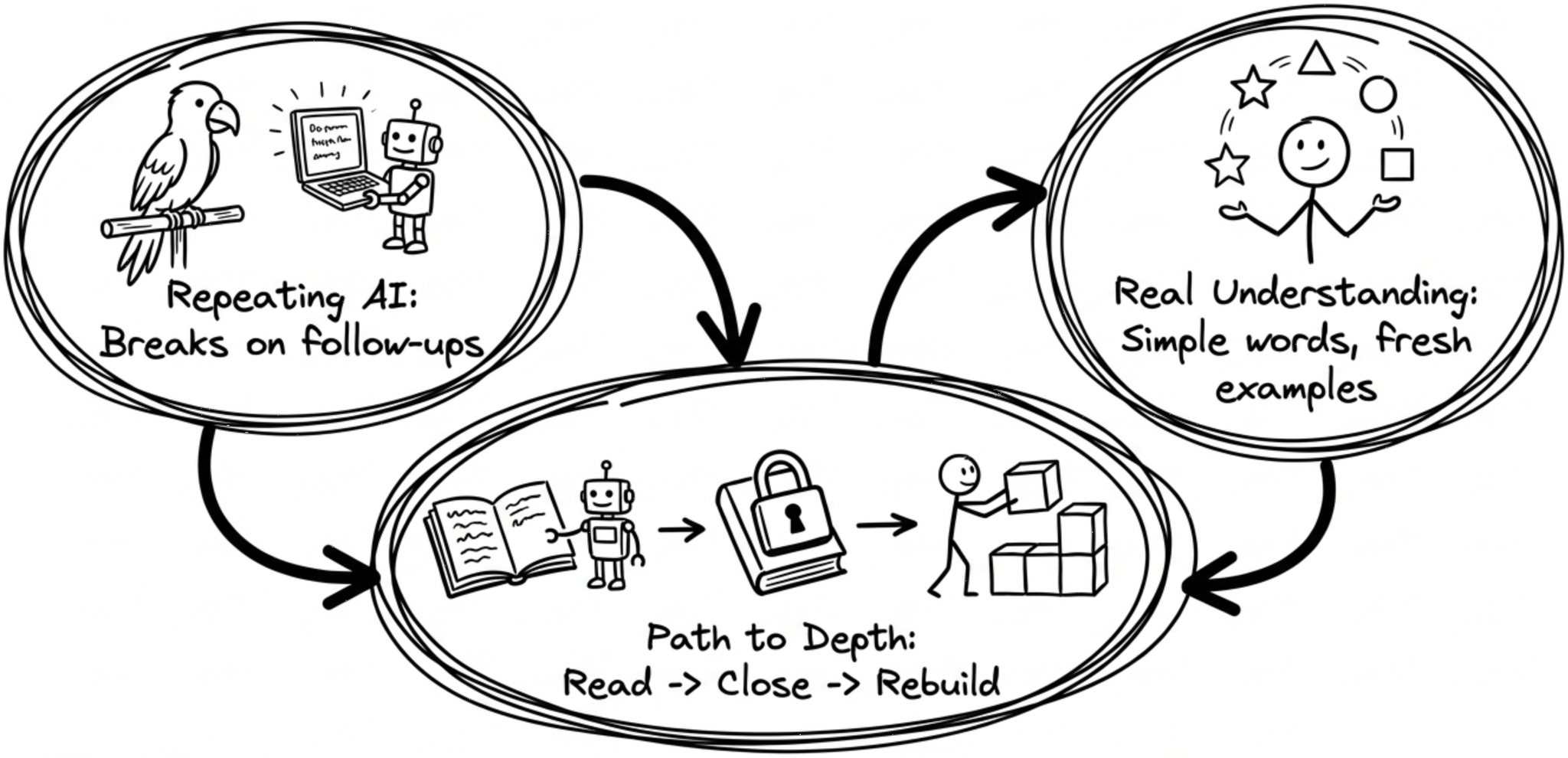

TL;DR: Spot The Real Understanding

Repeating AI: smooth first answer, silence on follow-ups, no examples of your own

Real understanding: simple words, fresh examples, can handle “what if” questions

Path to depth: read with AI, then close it and rebuild the explanation yourself

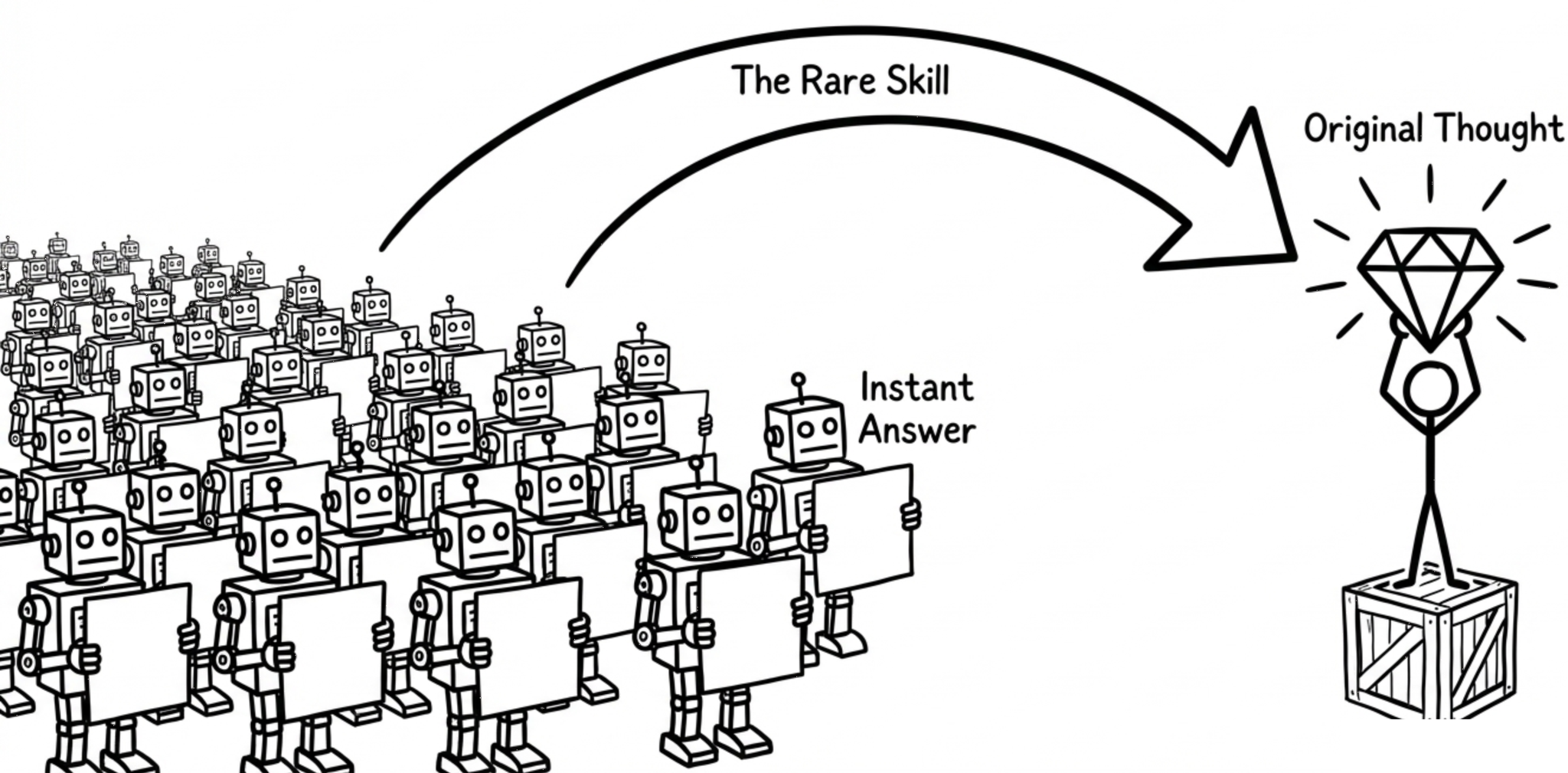

🎯 Why It Matters

In a world full of instant answers, the scarce skill is original understanding.

Today, pick one idea you “know.” Explain it without help. The stall is the answer.